Python Decorators for Production Machine Learning Engineering

In this article, you will learn how to use Python decorators to improve the reliability, observability, and efficiency of machine learning systems in production.

Topics covered include:

- Implementing retry logic with exponential backoff for unstable external dependencies.

- Validating inputs and enforcing schemas before model inference.

- Optimizing performance with caching, memory guards, and monitoring decorators.

Python Decorators for Production ML Engineering

Image by Editor

Introduction

You've probably written or used a decorator or two over the course of your Python career: perhaps a simple @timer to benchmark a function, or @login_required from the Flask-Login extension. In those contexts, decorators are a tidy convenience. In production machine learning, they become something far more critical.

Once models are live, the stakes change entirely. You're contending with flaky API calls, memory pressure from oversized tensors, upstream data that drifts without warning, and inference pipelines that need to fail gracefully at 3 AM with no one watching. The five decorator patterns covered here aren't textbook abstractions: each one targets a real, recurring failure mode in production ML systems, and together they give you a consistent way to write resilient inference code.

1. Automatic Retry with Exponential Backoff

Production ML pipelines rarely run in isolation. They call model endpoints, pull embeddings from vector databases, and fetch features from remote stores. Those calls sometimes fail: networks hiccup, services throttle requests, and cold starts introduce latency spikes that push calls past their timeouts. Scattering try/except blocks with ad hoc retry logic throughout your codebase is a maintenance problem waiting to happen.

The @retry decorator solves this cleanly. You configure it with parameters such as max_retries, backoff_factor, and a tuple of retriable exception types. The wrapper catches those specific exceptions, introduces exponentially increasing delays between attempts, and re-raises the exception once retries are exhausted.
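A minimal sketch of such a decorator follows, using only the standard library. The parameter names mirror the ones above; the default exception tuple (ConnectionError, TimeoutError), the small random jitter added to each delay, and the fetch_embedding function are illustrative assumptions, not any particular library's API. The wait before retry n is backoff_factor * 2 ** (n - 1) seconds.

```python
import functools
import logging
import random
import time

logger = logging.getLogger(__name__)


def retry(max_retries=3, backoff_factor=0.5, exceptions=(ConnectionError, TimeoutError)):
    """Retry the wrapped call when it raises one of `exceptions`.

    The wait before retry n (1-based) is backoff_factor * 2 ** (n - 1) seconds,
    plus up to 10% random jitter (an assumption) so clients don't retry in lockstep.
    """
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(max_retries + 1):
                try:
                    return func(*args, **kwargs)
                except exceptions as exc:
                    if attempt == max_retries:
                        raise  # retries exhausted: re-raise the original exception
                    delay = backoff_factor * 2 ** attempt
                    delay += random.uniform(0, 0.1 * delay)
                    logger.warning("%s failed with %r; retry %d/%d in %.2fs",
                                   func.__name__, exc, attempt + 1, max_retries, delay)
                    time.sleep(delay)
        return wrapper
    return decorator


# Example usage: a hypothetical flaky lookup that times out twice, then succeeds.
calls = {"n": 0}


@retry(max_retries=3, backoff_factor=0.01, exceptions=(TimeoutError,))
def fetch_embedding(item_id):
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError(f"simulated timeout for {item_id}")
    return [0.1, 0.2, 0.3]


print(fetch_embedding("item-42"))  # prints [0.1, 0.2, 0.3] after two retries
```

Exceptions outside the configured tuple propagate on the first attempt. Because every retry repeats the call, this pattern is only safe for idempotent operations such as reads or pure inference.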

The key advantage is separation of concerns: your core function simply performs the call, while resilience logic lives entirely in the decorator. Retry behavior is tunable per function through decorator arguments, and the result is a codebase that stays readable as complexity grows. For model-serving endpoints prone to transient timeouts, this single pattern can be the difference between a noisy alert and a seamless recovery.

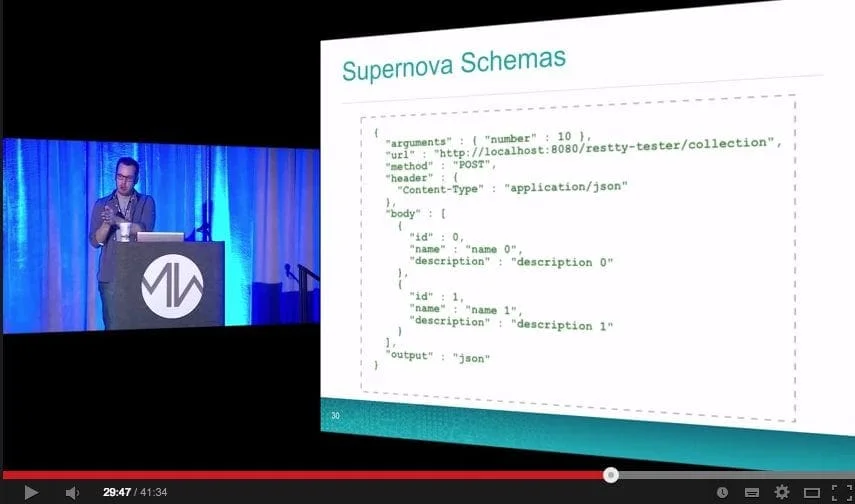

2. Input Validation and Schema Enforcement

Data quality problems are among the most insidious failure modes in ML systems. Models are trained on features with specific types, distributions, and value ranges. In production, upstream changes can quietly introduce null values, wrong data types, or malformed array shapes — and by the time degraded predictions become visible, the issue may have been live for hours.

A @validate_input decorator intercepts function arguments before they reach model logic. You can design it to verify array shapes, confirm required dictionary keys are present, or check that values fall within acceptable ranges. When validation fails, the decorator raises a descriptive error or returns a safe fallback — preventing corrupt data from propagating downstream.
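Here is a minimal, standard-library sketch that checks one dict argument for required, non-null keys and inclusive numeric ranges, raising a descriptive error on failure. The InputValidationError class, the parameter names, and the toy predict function are hypothetical; a NumPy-based version would compare arr.shape against an expected shape at the same point.

```python
import functools
import inspect
import math


class InputValidationError(ValueError):
    """Raised when an argument fails validation before it reaches the model."""


def validate_input(arg_name, required_keys=(), ranges=None):
    """Validate the dict passed as `arg_name` before calling the wrapped function.

    required_keys: keys that must be present with a non-None value.
    ranges: mapping of key -> (low, high), inclusive bounds checked when the key is present.
    """
    ranges = ranges or {}

    def decorator(func):
        sig = inspect.signature(func)
        if arg_name not in sig.parameters:
            raise TypeError(f"{func.__name__} has no parameter named {arg_name!r}")

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Resolve positional and keyword calls to the same named argument.
            bound = sig.bind(*args, **kwargs)
            bound.apply_defaults()
            record = bound.arguments[arg_name]
            if not isinstance(record, dict):
                raise InputValidationError(
                    f"{arg_name} must be a dict, got {type(record).__name__}")
            missing = [k for k in required_keys if record.get(k) is None]
            if missing:
                raise InputValidationError(f"{arg_name} has missing or null keys: {missing}")
            for key, (low, high) in ranges.items():
                if key not in record:
                    continue
                value = record[key]
                # bool is a subclass of int, so reject it explicitly.
                if isinstance(value, bool) or not isinstance(value, (int, float)):
                    raise InputValidationError(
                        f"{key} must be numeric, got {type(value).__name__}")
                if math.isnan(value) or not (low <= value <= high):
                    raise InputValidationError(f"{key}={value!r} is outside [{low}, {high}]")
            return func(*args, **kwargs)
        return wrapper
    return decorator


# Example usage with a hypothetical scoring function standing in for a model.
@validate_input("features", required_keys=("age", "income"), ranges={"age": (0, 120)})
def predict(features):
    return 0.8 if features["income"] > 50_000 else 0.3
```

With this sketch, predict({"age": 200, "income": 60000}) raises InputValidationError before any model code runs. Returning a safe fallback instead would be a one-line change in the wrapper.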

This pattern pairs naturally with Pydantic for more sophisticated schema enforcement. Even a lightweight implementation that checks input shapes and types before inference, however, catches many common production issues before they reach the model. Think of it as proactive defense rather than reactive debugging.

3. Result Caching with TTL

Real-time inference workloads frequently encounter duplicate inputs. The same user may hit a recommendation endpoint multiple times within a single session; a batch job may reprocess overlapping feature sets. Running a full inference pass on every request wastes compute and inflates latency unnecessarily.

A @cache_result decorator with a configurable time-to-live (TTL) stores function outputs keyed by hashed inputs. Internally, it maintains a dictionary mapping argument hashes to (result, timestamp) tuples. On each call, the wrapper checks whether a valid cached entry exists. If the entry falls within the TTL window, the cached value is returned immediately. Otherwise, the function executes and the cache updates.
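The sketch below follows that design with the standard library only. Two details are assumptions rather than part of the description above: arguments are hashed by pickling them, since lists, dicts, and NumPy arrays are not hashable directly (so arguments must be picklable), and a maxsize bound with oldest-entry eviction keeps the cache from growing without limit. The clock parameter exists so tests can control time. The sketch is not thread-safe; a multi-threaded server would need a lock around the dictionary.

```python
import functools
import hashlib
import pickle
import time


def cache_result(ttl_seconds=30.0, maxsize=1024, clock=time.monotonic):
    """Cache results per argument set for ttl_seconds (arguments must be picklable)."""
    def decorator(func):
        cache = {}  # key -> (result, timestamp); dict order doubles as write order

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            # Hash a serialized form so unhashable inputs (lists, dicts, arrays) work.
            key = hashlib.sha256(
                pickle.dumps((args, sorted(kwargs.items())))).hexdigest()
            now = clock()
            entry = cache.get(key)
            if entry is not None and now - entry[1] < ttl_seconds:
                return entry[0]  # fresh hit: skip the computation entirely
            result = func(*args, **kwargs)
            if key in cache:
                del cache[key]  # stale entry: re-insert below so it becomes the newest
            elif len(cache) >= maxsize:
                # Full: drop expired entries first, then the oldest write if still full.
                for stale in [k for k, (_, ts) in cache.items() if now - ts >= ttl_seconds]:
                    del cache[stale]
                if len(cache) >= maxsize:
                    del cache[next(iter(cache))]
            cache[key] = (result, now)
            return result

        wrapper.cache_clear = cache.clear
        return wrapper
    return decorator


# Example usage: a hypothetical recommendation call.
@cache_result(ttl_seconds=30.0)
def recommend(user_id, top_k=3):
    return [f"{user_id}-item-{i}" for i in range(top_k)]
```

A repeat call such as recommend("u1") within 30 seconds returns the stored list without recomputing. Because that is the same object each time, callers should treat cached results as read-only.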

The TTL parameter is what makes this production-appropriate. Predictions can go stale — particularly as underlying features evolve — so caching without an expiration policy introduces its own correctness risks. In many real-time scenarios, even a 30-second TTL can meaningfully reduce redundant computation while keeping results fresh enough to be useful.

4. Memory-Aware Execution

Large models carry significant memory overhead. When multiple models run concurrently or batch sizes grow, it's easy to exhaust available RAM and crash the service. These failures tend to be intermittent, shaped by workload variability and the unpredictability of garbage collection timing, which makes them difficult to reproduce and diagnose after the fact.

A @memory_guard decorator checks available system memory before allowing a function to execute. Using psutil to read current memory utilization, it compares the current state against a configurable threshold — say, 85% — and responds accordingly. When memory is constrained, the decorator can invoke gc.collect(), emit a warning log, delay execution, or raise a custom exception that an orchestration layer can handle upstream.
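A minimal sketch, assuming a "collect garbage, re-check, then refuse" policy, which combines two of the responses listed above. psutil.virtual_memory().percent is psutil's system-wide utilization figure, and psutil must be installed when the default reader is used. MemoryPressureError, the two-reading policy, and the get_memory_percent parameter are illustrative choices; the reader is injectable so that tests, or a container-aware implementation, can replace psutil.

```python
import functools
import gc
import logging

logger = logging.getLogger(__name__)


class MemoryPressureError(RuntimeError):
    """Raised when memory stays above the threshold; an orchestrator can catch it."""


def _psutil_memory_percent():
    # Imported lazily so this module loads even where psutil is not installed.
    import psutil
    return psutil.virtual_memory().percent


def memory_guard(threshold_percent=85.0, get_memory_percent=_psutil_memory_percent):
    """Refuse to run the wrapped function while memory use is at or above the threshold."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            used = get_memory_percent()
            if used >= threshold_percent:
                logger.warning("%s: memory at %.1f%% (threshold %.1f%%); running gc.collect()",
                               func.__name__, used, threshold_percent)
                gc.collect()
                used = get_memory_percent()  # re-check after collection
                if used >= threshold_percent:
                    raise MemoryPressureError(
                        f"{func.__name__} refused to run: memory at {used:.1f}%")
            return func(*args, **kwargs)
        return wrapper
    return decorator


# Example usage: guarding a hypothetical batch-inference function.
@memory_guard(threshold_percent=85.0)
def run_batch(batch):
    return [x * 2 for x in batch]
```

Raising instead of sleeping keeps the decorator simple and lets the caller, or a queue worker, decide whether to retry later.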

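This is particularly valuable in containerized environments where memory limits are enforced strictly. A container that exceeds its memory limit is terminated by the kernel's OOM killer (Kubernetes reports it as OOMKilled) without warning. A memory guard gives your service a window to degrade gracefully or self-recover before hitting that hard ceiling. One caveat: psutil.virtual_memory() reports memory as the operating system sees it, which inside a container often reflects the host rather than the container's own limit, so a guard running under Kubernetes may need to read the container's cgroup memory statistics instead.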

5. Execution Logging and Monitoring

Observability in ML systems goes well beyond HTTP status codes. You need visibility into inference latency, anomalous inputs, shifting prediction distributions, and performance bottlenecks across functions. Ad hoc logging gets the job done early on, but as systems grow it becomes inconsistent, hard to maintain, and easy to accidentally omit in new code paths.

A @monitor decorator wraps functions with structured logging that automatically captures execution time, input summaries, output characteristics, and exception details. The resulting records can feed Python's standard logging module, Prometheus metrics, or observability platforms such as Datadog.

Under the hood, the decorator timestamps both the start and end of execution, logs any exceptions before re-raising them, and optionally pushes metrics to a monitoring backend — all without modifying the underlying function.
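The following standard-library sketch assumes one JSON log line per call plus an optional metric_sink callable; both metric_sink and the record's field names are hypothetical. Inputs and outputs are reduced to type, length, or shape so logs never carry full tensors or raw user data. To export to Prometheus, for example, the sink could call observe() on a prometheus_client Histogram with the record's duration.

```python
import functools
import json
import logging
import time

logger = logging.getLogger("inference.monitor")


def _summarize(value):
    """Describe a value cheaply: shape for array-likes, length for containers."""
    shape = getattr(value, "shape", None)
    if shape is not None:
        return {"type": type(value).__name__, "shape": list(shape)}
    if isinstance(value, (list, tuple, dict, str)):
        return {"type": type(value).__name__, "len": len(value)}
    return {"type": type(value).__name__}


def monitor(metric_sink=None):
    """Log one structured record per call; optionally hand it to metric_sink."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            record = {
                "function": func.__qualname__,
                "started_at": time.time(),  # wall-clock start timestamp
                "args": [_summarize(a) for a in args],
                "kwargs": {k: _summarize(v) for k, v in kwargs.items()},
                "status": "incomplete",
            }
            start = time.perf_counter()  # monotonic clock for the duration
            try:
                result = func(*args, **kwargs)
                record["status"] = "ok"
                record["output"] = _summarize(result)
                return result
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise  # re-raise unchanged after recording it
            finally:
                # Runs on success and failure, before control returns to the caller.
                record["ended_at"] = time.time()
                record["duration_ms"] = (time.perf_counter() - start) * 1000
                level = logging.ERROR if record["status"] == "error" else logging.INFO
                logger.log(level, json.dumps(record, default=str))
                if metric_sink is not None:
                    metric_sink(record)
        return wrapper
    return decorator


# Example usage: a hypothetical thresholding step in an inference pipeline.
@monitor()
def predict(scores, threshold=0.5):
    return [s > threshold for s in scores]
```

Because the wrapped function is untouched, the same decorator can be stacked on every stage of a pipeline to produce records with identical fields.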

The real payoff comes from applying this decorator consistently across the entire inference pipeline. The result is a unified, searchable record of predictions, latencies, and failures. When something goes wrong in production, engineers have actionable diagnostic context rather than incomplete logs and educated guesses.

Closing Thoughts

All five decorators discussed here share a common philosophy: keep core machine learning logic clean by pushing operational concerns to the edges. This separation improves readability, simplifies testing, and makes codebases significantly easier to maintain over time.

The practical advice is straightforward — start with the decorator that addresses your most pressing production challenge. For most teams, that means retry logic or monitoring. Once you experience the clarity this pattern brings to a codebase, it quickly becomes the default approach for managing operational complexity.